Major general-purpose AI services such as GPT, Claude, and Gemini are expected to become infrastructure that makes AI functionality available to everyone. When individual companies and people put AI to work, however, it is becoming almost certain that they will do so through AI agents applied to specific applications and tasks. AI agents are more than simple automation: following the user’s general instructions, they judge the situation autonomously and carry out tasks flexibly. Many people share a vision of a future in which AI permeates every corner of society through general-purpose AI and AI agents tailored to individual tasks.

However, will AI truly work as humans intend? “AI governance” is being discussed as a framework to ensure that AI operates correctly and does not cause unintended harm to humans. Currently, the focus seems to be mainly on aspects such as legal systems and operational rule-making. Mindware Research Institute proposes incorporating an AI-human interface into this framework.

Current AI is built on deep learning, a technology that uses artificial neural networks. For many years it ran on small networks, but in recent years it has been scaled up to reach its present level. However, the internal processing of deep learning remains a black box that humans cannot interpret, which means its safety has not been confirmed. Even so, enormous investments are being made and practical applications are advancing rapidly. It has been reported that prominent AI researchers, alarmed by this situation, are leaving major AI companies one after another.

AI technology includes not only deep learning but also a family of explainable AI technologies such as Self-Organizing Maps (SOMs) and Bayesian Belief Networks (BBNs). Mindware Research Institute argues that, rather than relying solely on deep learning, it is more important to make AI “controllable” by combining deep learning with these explainable AI technologies. LLMs make it appear as if AI understands human language, but the real question is whether the AI will act according to the commands humans give it in words. For this reason, humans need to communicate with AI through mathematical methods rather than natural language. This is what explainable AI makes possible.

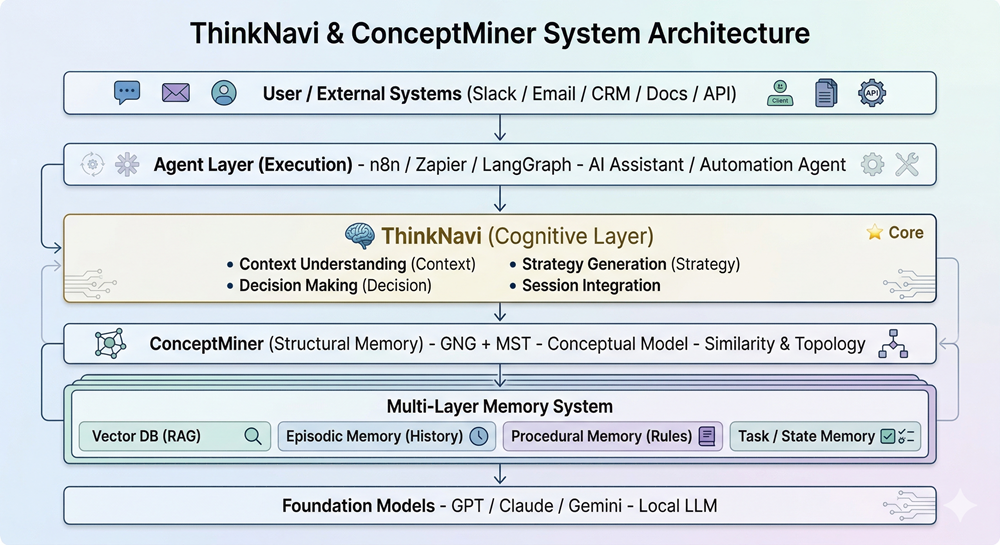

At Mindware Research Institute, we decided to use Growing Neural Gas (GNG), an advanced form of SOM, as the explainable AI that communicates with other AI through embedding vectors with thousands of dimensions. Traditional SOMs are limited in their ability to summarize such ultra-high-dimensional data because of their fixed two-dimensional topology. Like SOMs, however, GNGs represent the “concepts” contained in the data. Furthermore, human “will” or “strategies” can be defined at any node in the model. When a deep learning AI reads these definitions, mathematical communication between the AI and humans is established. The nodes of the GNG are connected by the edges of a Minimum Spanning Tree (MST), which determines the graph structure.
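To make the GNG+MST structure concrete, the following is a minimal sketch of how MST edges could be derived from a set of node vectors. It is not Mindware Research Institute's implementation. The function names, the use of Euclidean distance, the choice of Prim's algorithm, and the tiny three-dimensional vectors are all assumptions made for illustration, and the GNG training that would produce the node vectors is not shown.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def minimum_spanning_tree(nodes):
    """Prim's algorithm on the complete graph over the node vectors.

    Returns a list of (i, j, distance) edges, where i and j are node indices.
    """
    if not nodes:
        return []
    # best[j] = (distance, i): cheapest known link from the tree to node j.
    # The tree starts with node 0 only.
    best = {j: (euclidean(nodes[0], nodes[j]), 0) for j in range(1, len(nodes))}
    edges = []
    while best:
        # Attach the node that is currently closest to the tree.
        j = min(best, key=lambda k: best[k][0])
        dist, i = best.pop(j)
        edges.append((i, j, dist))
        # The new node may offer a shorter link to the remaining nodes.
        for k in best:
            d = euclidean(nodes[j], nodes[k])
            if d < best[k][0]:
                best[k] = (d, j)
    return edges

# Hypothetical node vectors (real embeddings would have thousands of dimensions).
nodes = [
    [0.0, 0.0, 0.0],
    [1.0, 0.0, 0.0],
    [1.0, 1.0, 0.0],
    [5.0, 5.0, 5.0],
]
mst_edges = minimum_spanning_tree(nodes)
for i, j, dist in mst_edges:
    print(f"node {i} -- node {j}  (distance {dist:.3f})")
```

An MST connects all n nodes with exactly n - 1 edges and no cycles, so every concept node is reachable from every other along a single path.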

GNG+MST functions as a structured “long-term memory” for each individual user. It provides the foundation for making appropriate decisions about new input data based on past memories. For example, when an email arrives from a customer, the goal is to review past interactions with that customer and respond accordingly. Current AI agents may be able to make very simple decisions, but decision-making based on past memories requires a structured memory model, which current AI agents do not implement.
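One way to picture memory-based recall over such a graph is sketched below: a new input embedding is matched to its closest node, and the memories stored at that node and at its directly connected neighbors are returned. This is only an illustration under stated assumptions. The node names, vectors, edges, stored memory strings, the `recall` function, and the choice of cosine similarity are all hypothetical and are not taken from the article.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def recall(query, node_vectors, edges, memories):
    """Find the node closest to the query, then gather memories from that
    node and from the nodes directly linked to it."""
    best = max(node_vectors, key=lambda name: cosine_similarity(query, node_vectors[name]))
    neighbors = sorted(b if a == best else a for a, b in edges if best in (a, b))
    recalled = list(memories.get(best, []))
    for name in neighbors:
        recalled.extend(memories.get(name, []))
    return best, neighbors, recalled

# Hypothetical concept nodes for one customer (toy 3-D vectors).
node_vectors = {
    "billing": [1.0, 0.0, 0.0],
    "delivery": [0.0, 1.0, 0.0],
    "refund": [0.8, 0.0, 0.6],
}
# Hypothetical graph edges between the nodes.
edges = [("billing", "refund"), ("refund", "delivery")]
# Hypothetical past interactions attached to each node.
memories = {
    "billing": ["earlier invoice question"],
    "refund": ["earlier refund request"],
    "delivery": ["earlier shipping delay"],
}

# Embedding of a newly received email (hypothetical values).
query = [0.9, 0.1, 0.0]
best_node, neighbors, recalled = recall(query, node_vectors, edges, memories)
print(best_node, neighbors, recalled)
```

In this toy example the new email lands on the "billing" node, so the recall returns the billing memory together with the memory from the linked "refund" node, while the unconnected "delivery" memory is left out.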

In the future, general-purpose AIs such as LLMs will likely retain memories of individual users, but it is considered dangerous to entrust all decision-making to general-purpose AI. It is essential for users, such as companies, to implement their own strategic models in a format that is interoperable with AI. Mindware Research Institute helps AI users proactively utilize AI and make it controllable.